AI Won’t Fix Government Until Government Fixes Itself

Governments are racing to adopt AI, but their biggest obstacle isn’t the technology; it’s themselves. Beneath talk of innovation lies a maze of outdated systems, siloed data, and tangled rules that keep progress crawling. AI doesn’t fix dysfunction; it magnifies it. The real test isn’t whether machines can govern but whether our institutions are ready for them.

Structural Challenges in Government Technology Systems

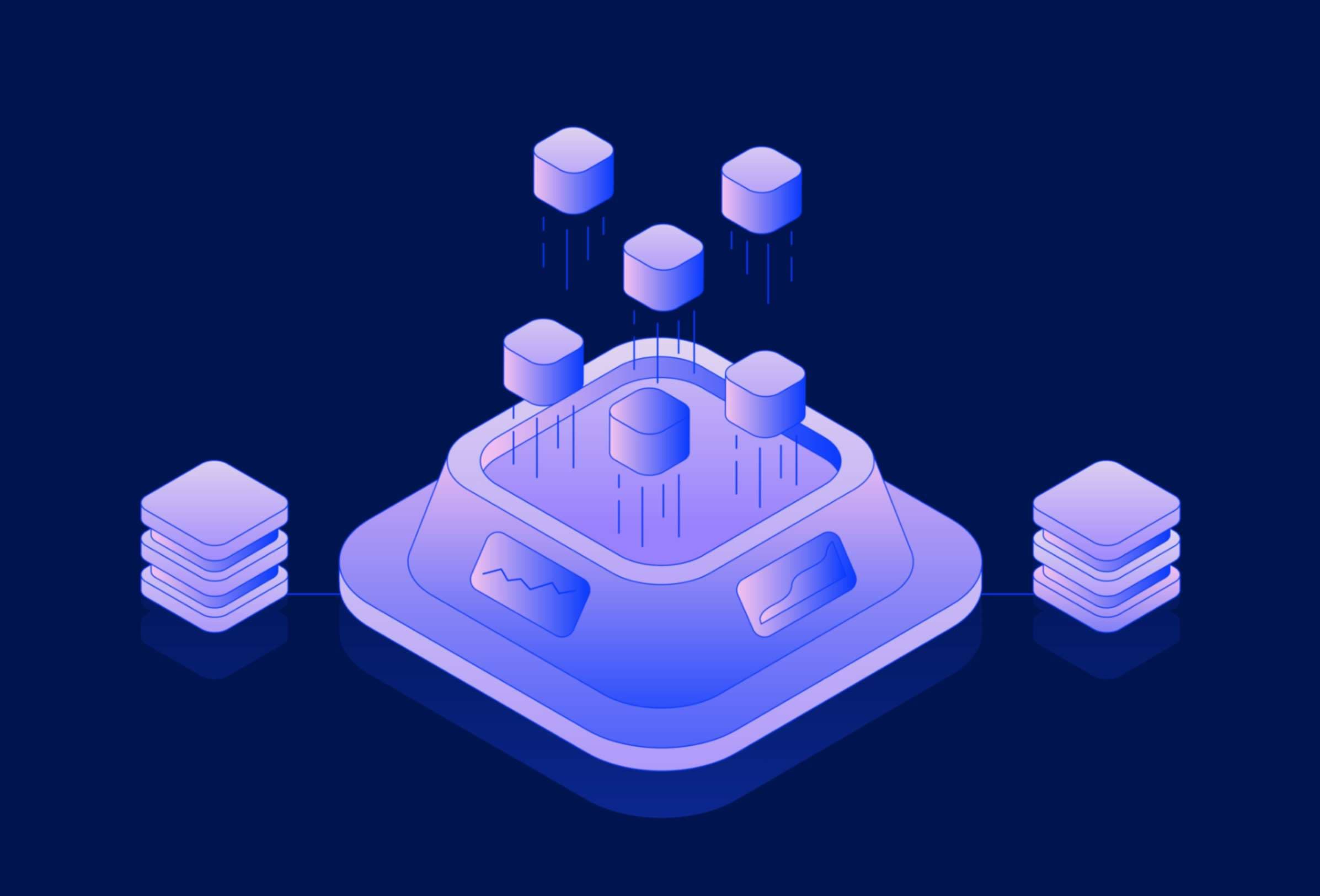

Long before artificial intelligence entered government operations, agencies were contending with long-standing structural limitations. Fragmented data systems remain one of the most significant barriers to integrated service delivery. Many departments operate in silos, relying on legacy databases that are incompatible with modern platforms or with each other. This fragmentation limits the ability of agencies to share data securely and efficiently, which is essential for AI applications that depend on large, high-quality datasets to function effectively. Without foundational interoperability, even the most advanced AI tools are unlikely to deliver meaningful insights or equitable outcomes.

Legacy infrastructure compounds the issue by limiting the capacity to upgrade systems or deploy new technologies at scale. Many agencies still rely on outdated software, hardware, or vendor contracts that are difficult to modernize without substantial investment. This technical debt not only slows innovation but also increases the risk of cybersecurity vulnerabilities. Inconsistent policy adoption across departments adds another layer of complexity, especially when different teams interpret digital standards or data privacy regulations differently. These inconsistencies create uneven experiences for residents and complicate efforts to monitor or audit AI systems across jurisdictions. Finally, a shortage of trained personnel capable of managing and evaluating AI tools makes it difficult for agencies to build sustainable, ethical governance practices around these technologies.

AI’s Pressure on Transparency, Bias, and Accountability

Artificial intelligence intensifies existing governance challenges by introducing systems that are often opaque, difficult to audit, and prone to reproducing systemic bias. Algorithmic decision-making, particularly in areas such as housing, policing, and benefits eligibility, can embed historical inequalities into automated processes if not properly monitored. Without clear documentation and public disclosure of how algorithms function and what data they rely on, it becomes nearly impossible for residents to understand how decisions affecting them are made. This opacity erodes public trust and limits opportunities for democratic oversight.

Accountability mechanisms are often not designed to accommodate the complexity of AI systems. Traditional performance audits or compliance reviews may not capture the nuances of algorithmic behavior. Furthermore, many AI models change over time as they are retrained or updated on new data, making their decision logic a moving target. This dynamic nature complicates oversight and raises questions about who is responsible when an AI system produces a harmful or unfair outcome. Studies have shown that AI tools used in public settings, such as predictive policing or child welfare risk assessments, have exhibited racial and socioeconomic bias when not carefully evaluated¹. To navigate these risks, agencies need not only technical tools but also robust policies that clarify roles, responsibilities, and redress mechanisms.

Emerging Governance Models for Responsible AI

In response to these challenges, several public institutions are beginning to adopt structured governance models tailored to AI oversight. One such model is the establishment of algorithmic oversight committees composed of interdisciplinary experts, community representatives, and technologists. These committees review the deployment of AI tools, advise on ethical guidelines, and recommend adjustments based on community impact. For example, New York City’s Automated Decision Systems Task Force was one of the first initiatives to examine how algorithms affect fairness and transparency in urban governance². While its implementation faced limitations, the framework it proposed remains instructive for other jurisdictions.

Another promising approach is the use of algorithmic impact assessments (AIAs), which require agencies to evaluate the potential risks and benefits of AI systems before deployment. These assessments typically address issues such as data provenance, stakeholder engagement, and potential disparate impacts. In conjunction with AIAs, documentation standards like model cards and datasheets for datasets help provide transparency into how systems were built and what limitations they may carry³. Equity audits, conducted either internally or by third parties, add another layer of scrutiny by examining how AI systems perform across different demographic groups. Finally, human-in-the-loop protocols ensure that critical decisions are not made solely by automated systems, preserving human judgment in contexts that demand empathy, discretion, or contextual awareness.
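To make the equity audit idea concrete, here is a minimal Python sketch of one check an auditor might run: comparing favorable-outcome rates across demographic groups. Everything in it is an illustrative assumption rather than a method drawn from the sources cited here: the function names, the made-up sample data, and the 0.8 review threshold, which borrows the "four-fifths" rule of thumb from U.S. employment-selection guidance. A real audit would look at several metrics and at the context behind them.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the share of favorable outcomes for each group.

    `decisions` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest group rate."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome
    return min(rates.values()) / highest


# Hypothetical sample: group A approved 8 of 10 times, group B 5 of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

rates = approval_rates(sample)
ratio = disparate_impact_ratio(rates)

# Assumed review threshold (four-fifths rule of thumb), not a legal test.
needs_review = ratio < 0.8
```

A ratio well below the chosen threshold does not prove unfairness on its own; it is a signal that the system's outcomes deserve closer human review.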

Applying a Governance-First Lens to AI Integration

The rapid deployment of AI in public institutions is not a shortcut to modernization. It is a comprehensive stress test for governance capacity. Cities and agencies that focus solely on technological adoption without policy alignment will likely encounter unintended consequences. AI exposes the weaknesses in governance infrastructure, from outdated procurement rules to insufficient public engagement practices. This is why governance must precede and shape digital transformation initiatives. Jurisdictions that proactively build ethical, transparent, and equitable oversight mechanisms will be better positioned to harness AI’s benefits while minimizing harms.

Agencies should begin by evaluating their AI systems against emerging governance frameworks. This includes assessing whether tools have undergone impact assessments, whether public documentation is available, and whether feedback mechanisms allow for meaningful resident input. Developing a Responsible Public Sector AI policy guide is another essential step. Such a guide should outline principles, procedures, and compliance standards tailored to the specific operational and ethical risks each agency faces. By embedding governance into the core of their digital strategies, institutions can ensure that AI serves the public interest rather than undermining it.
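One lightweight way to start that evaluation is to keep an inventory of AI systems and record, for each one, whether the three checks above have been met. The sketch below is a hypothetical illustration in Python; the record fields, system names, and values are assumptions invented for the example, not part of any published framework.

```python
from dataclasses import dataclass


@dataclass
class AISystemRecord:
    """One entry in a hypothetical agency inventory of AI systems."""
    name: str
    impact_assessment_done: bool
    public_documentation: bool
    resident_feedback_channel: bool

    def gaps(self):
        """List the governance checks this system has not yet met."""
        checks = {
            "impact assessment": self.impact_assessment_done,
            "public documentation": self.public_documentation,
            "resident feedback channel": self.resident_feedback_channel,
        }
        return [label for label, met in checks.items() if not met]


# Made-up inventory entries for illustration only.
inventory = [
    AISystemRecord("benefits-triage", True, False, False),
    AISystemRecord("permit-chatbot", True, True, True),
]

# Map each system to its outstanding governance gaps.
report = {record.name: record.gaps() for record in inventory}
```

Even a simple report like this gives leadership a shared view of which systems are running without documentation or a feedback channel, which is a reasonable starting point for a fuller policy guide.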

Engaging in Collective Learning and Capacity Building

No single agency can address the complexities of AI governance in isolation. Participating in collaborative forums, such as interagency roundtables on algorithmic transparency, allows practitioners to share lessons learned, identify common challenges, and co-develop best practices. These engagements also help elevate the voices of communities affected by automated decision-making, ensuring that governance frameworks reflect lived experiences and not just technical feasibility.

Agencies should consider joining national or regional initiatives focused on digital accountability in government. For instance, the Government Accountability Office in the United States has released frameworks for assessing AI accountability and bias mitigation⁴. Similarly, the Urban Institute and the Center for Democracy & Technology offer resources and convenings that support public agencies in developing responsible AI strategies⁵. These platforms provide the ongoing education and peer support necessary for navigating the evolving AI landscape. Building internal capacity through training programs, fellowships, and cross-disciplinary hiring will also be crucial to sustaining long-term governance practices.

The Future of Digital Government Depends on Oversight

AI will not decide whether government evolves. Governance will. Institutions that integrate disciplined oversight into their digital transformation efforts will remain credible, transparent, and resilient. Those that fail to do so may find themselves governed by the very systems they intended to control. The stakes are high, but the path forward is clear: prioritize governance, invest in ethical infrastructure, and engage communities in shaping the future of automated public services.

The municipalities that act today to build transparent, accountable, and equitable AI systems will set the precedent for responsible innovation. This is not just a technical challenge. It is a policy imperative. Agencies must move beyond experimentation and into deliberate, structured governance. AI is not replacing government. It is testing it. And the results will depend entirely on how well we govern, not how quickly we automate.

Bibliography

1. Angwin, Julia, Jeff Larson, Surya Mattu, and Lauren Kirchner. "Machine Bias." *ProPublica*, May 23, 2016. https://www.propublica.org/article/machine-bias-risk-assessments-in-criminal-sentencing.

2. New York City Automated Decision Systems Task Force. *Automated Decision Systems Task Force Report.* New York City, November 2019. https://www.nyc.gov/assets/adstaskforce/downloads/pdf/ADS-Report-11192019.pdf.

3. Mitchell, Margaret, Simone Wu, Andrew Zaldivar, Parker Barnes, Lucy Vasserman, Ben Hutchinson, Elena Spitzer, Inioluwa Deborah Raji, and Timnit Gebru. "Model Cards for Model Reporting." *Proceedings of the Conference on Fairness, Accountability, and Transparency*, 2019. https://doi.org/10.1145/3287560.3287596.

4. United States Government Accountability Office. *Artificial Intelligence: An Accountability Framework for Federal Agencies and Other Entities.* June 2021. https://www.gao.gov/assets/gao-21-519sp.pdf.

5. Urban Institute and Center for Democracy & Technology. *Algorithmic Accountability Policy Toolkit.* 2020. https://www.urban.org/research/publication/algorithmic-accountability-policy-toolkit.
