Facial Recognition in Policing: Balancing Public Safety and Civil Rights

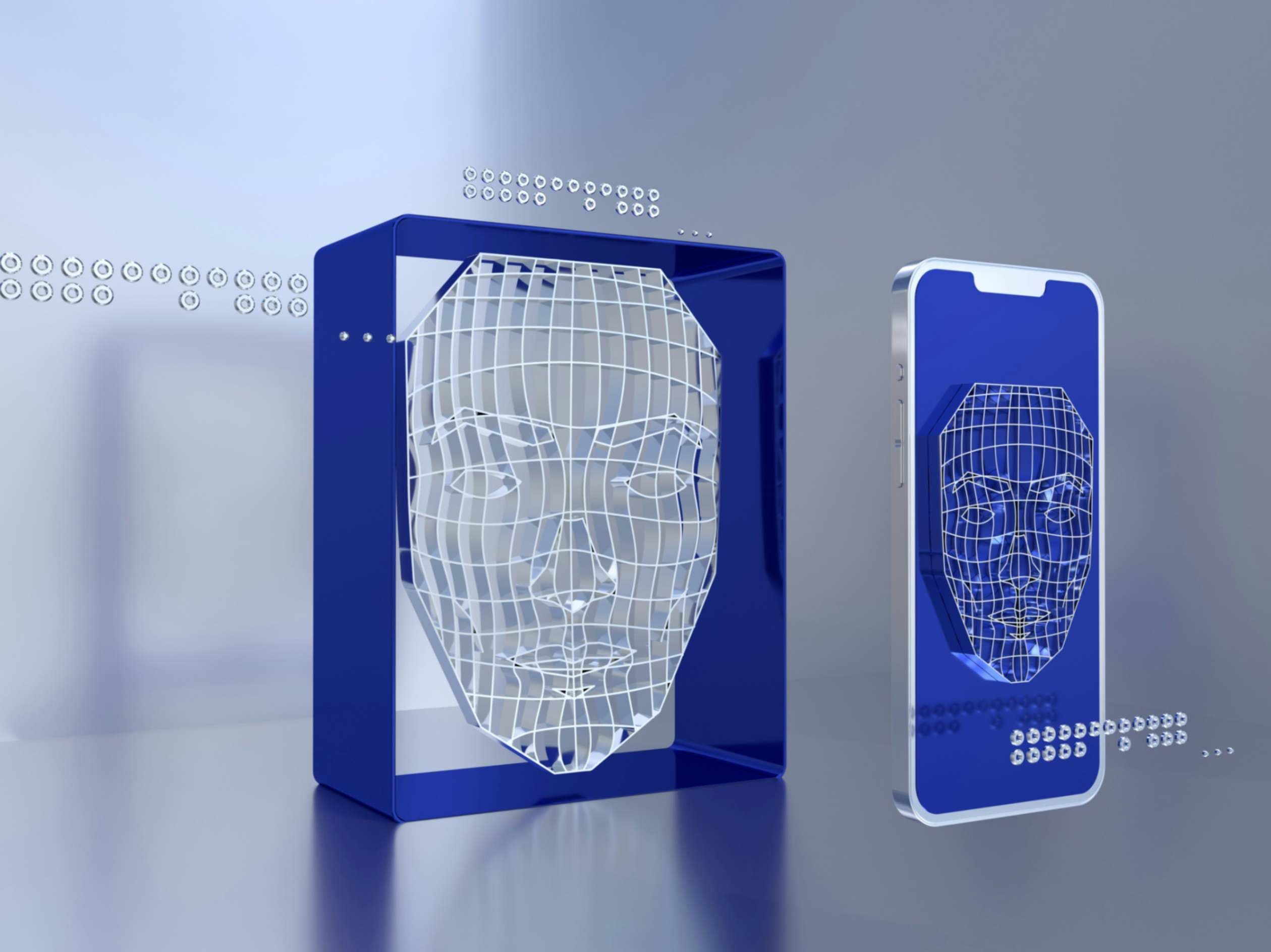

Over the last decade, the deployment of AI-driven facial recognition in law enforcement has expanded rapidly. Agencies across the United States now integrate facial recognition into investigative workflows, using it to match surveillance footage against driver's license databases, mugshot repositories, and even social media images. The Pinellas County Sheriff's Office in Florida, for instance, has used the technology in over 8,000 cases, often relying on a statewide database of more than 30 million images to aid investigations [1]. These systems allow law enforcement to identify suspects in near real time, reducing the time and resources required for traditional investigative methods.

Facial recognition also plays a significant role in locating missing persons and verifying identities during emergencies. In 2018, the Delhi Police in India used an AI-based facial recognition system to identify thousands of missing children and reunite them with their families [2]. In the United States, the Department of Homeland Security has tested facial recognition at airports to verify passenger identities, improving both security and efficiency [3]. These examples illustrate how the technology, when used responsibly, can be a powerful tool for enhancing public safety.

Controversies and Documented Errors

Despite its benefits, facial recognition has drawn significant criticism because of high-profile errors and systemic biases. In 2020, Robert Williams, a Black man from Michigan, was wrongfully arrested after a facial recognition system incorrectly matched his face to surveillance footage from a shoplifting incident [4]. The case highlighted concerns about racial disparities in algorithmic accuracy. A 2019 study by the National Institute of Standards and Technology (NIST) found that many of the facial recognition algorithms it tested exhibit higher false positive rates for African American and Asian faces than for white faces [5].

Beyond misidentification, there are concerns about the unchecked use of the technology in public spaces. In 2019, it was revealed that the Metropolitan Police in London had used live facial recognition cameras during public events without sufficient public notice or transparency [6]. Civil liberties advocates argue that such practices create a chilling effect on free speech and assembly. These controversies have prompted several cities, including San Francisco, Boston, and Portland, to ban or restrict the use of facial recognition by municipal agencies [7].

Legal Considerations: Privacy, Consent, and Admissibility

The legal framework around facial recognition is still evolving, and many jurisdictions lack clear statutes governing its use. A key concern is whether individuals must consent to having their biometric data collected and analyzed. In Illinois, the Biometric Information Privacy Act (BIPA) requires companies to obtain informed consent before collecting biometric identifiers such as facial scans, and it has led to multiple lawsuits against firms accused of violating this provision [8]. Municipal governments considering the use of facial recognition must be aware of state-level statutes and ensure compliance to avoid costly litigation.

Another challenge lies in the courtroom. The admissibility of facial recognition evidence can be contested, especially if the underlying algorithm is proprietary and cannot be independently reviewed. Defense attorneys have argued that without transparency into how the AI reaches its conclusions, the technology fails to meet the standards for evidentiary reliability under the Daubert or Frye tests [9]. To mitigate these issues, agencies should favor vendors willing to provide algorithmic documentation and to submit their systems to independent validation.

Practical Oversight Mechanisms for Responsible Use

For municipal agencies deploying facial recognition, strong governance frameworks are essential. Independent audits should be conducted regularly to assess both technical performance and policy compliance. These audits must include accuracy testing across demographic groups to identify and address potential biases. The New York Police Department, for example, responded to criticism by updating its policies so that facial recognition may be used only to generate investigative leads, not to establish probable cause for arrest [10]. Such policy refinements can help reduce misuse and increase public confidence.
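The demographic accuracy testing described above can be illustrated with a small calculation. The following is a minimal sketch, not an audit methodology: it assumes an auditor already has labeled test comparisons, and the function names, the tuple layout, and the group labels are all hypothetical. It computes the false positive rate per group (the share of different-person comparisons that the system wrongly reported as a match) and the ratio between the highest and lowest group rates.

```python
from collections import defaultdict


def false_positive_rate_by_group(trials):
    """Return {group: false positive rate} for labeled test comparisons.

    Each trial is a hypothetical tuple (group, same_person, system_said_match).
    Only comparisons of two different people can produce a false positive,
    so same-person comparisons are skipped.
    """
    impostor_trials = defaultdict(int)
    false_positives = defaultdict(int)
    for group, same_person, system_said_match in trials:
        if same_person:
            continue
        impostor_trials[group] += 1
        if system_said_match:
            false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in impostor_trials.items()}


def disparity_ratio(rates):
    """Ratio of the highest group rate to the lowest (1.0 means parity)."""
    highest = max(rates.values())
    lowest = min(rates.values())
    if highest == 0:
        return 1.0  # no false positives in any group
    if lowest == 0:
        return float("inf")  # at least one group has none, another has some
    return highest / lowest


# Made-up sample data, for illustration only.
sample_trials = [
    ("group_a", False, False),
    ("group_a", False, False),
    ("group_a", False, False),
    ("group_a", False, True),
    ("group_a", True, True),
    ("group_b", False, True),
    ("group_b", False, True),
    ("group_b", False, False),
    ("group_b", False, False),
    ("group_b", True, True),
]

rates = false_positive_rate_by_group(sample_trials)
print(rates)
print(disparity_ratio(rates))
```

In this made-up sample, group_b's false positive rate is twice that of group_a. A real audit would need far larger test sets and an agreed threshold for what level of disparity triggers corrective action.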

Transparency is equally important. Agencies should publish transparency reports detailing the frequency, purpose, and outcomes of facial recognition deployments. Public disclosure builds trust and encourages constructive dialogue with civil society groups. Additionally, establishing civilian oversight boards with the authority to review and advise on surveillance technology use can provide a crucial check on operational practices. These boards should include technologists, legal experts, and community representatives to ensure balanced perspectives.
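As a rough illustration of what the figures in a transparency report could be built from, the sketch below tallies a log of searches by purpose and outcome. It assumes the agency keeps one record per search. The `SearchRecord` fields, the category strings, and the `summarize` function are hypothetical and do not reflect any agency's actual reporting format.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class SearchRecord:
    """One facial recognition search (hypothetical log format)."""
    purpose: str  # e.g. "robbery investigation", "missing person"
    outcome: str  # e.g. "no candidate", "lead generated", "lead ruled out"


def summarize(records):
    """Count searches overall, by stated purpose, and by outcome."""
    records = list(records)
    return {
        "total_searches": len(records),
        "by_purpose": dict(Counter(r.purpose for r in records)),
        "by_outcome": dict(Counter(r.outcome for r in records)),
    }


# Made-up log entries, for illustration only.
sample_log = [
    SearchRecord("robbery investigation", "lead generated"),
    SearchRecord("robbery investigation", "no candidate"),
    SearchRecord("missing person", "lead generated"),
]

print(summarize(sample_log))
```

Publishing counts like these at a regular interval would give an oversight board a consistent baseline to compare across reporting periods.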

Balancing Public Safety with Civil Liberties

The tension between safety and privacy is not new, but AI-based surveillance technologies amplify the stakes. Facial recognition offers real benefits in terms of investigative speed and efficiency, but without rigorous oversight, it risks eroding public trust and infringing on constitutional rights. Municipal leaders must engage in deliberate governance, ensuring that security tools are deployed in ways that respect civil liberties and maintain democratic accountability.

Striking the right balance requires sustained investment in policy development, stakeholder engagement, and technological literacy within local government. Agencies must resist the temptation to adopt new tools without fully understanding their implications. By building oversight mechanisms into procurement, training, and deployment processes, municipalities can harness the capabilities of facial recognition while safeguarding the fundamental rights of the communities they serve.

Bibliography

[1] Garvie, Clare, Alvaro Bedoya, and Jonathan Frankle. "The Perpetual Line-Up: Unregulated Police Face Recognition in America." Georgetown Law Center on Privacy & Technology, 2016.

[2] Chowdhury, Nandita. "Delhi Police Reunites Thousands of Missing Children Using Facial Recognition." NDTV, April 22, 2018.

[3] U.S. Government Accountability Office. "Facial Recognition Technology: Current and Planned Uses by Federal Agencies." GAO-21-518, August 2021.

[4] Hill, Kashmir. "Wrongfully Accused by a Face: A Black Man’s Arrest Shows Facial Recognition’s Dangers." The New York Times, June 24, 2020.

[5] Grother, Patrick, Mei Ngan, and Kayee Hanaoka. "Face Recognition Vendor Test (FRVT) Part 3: Demographic Effects." National Institute of Standards and Technology, NISTIR 8280, December 2019.

[6] Liberty. "Metropolitan Police Use of Facial Recognition Technology." Liberty Human Rights Briefing, January 2020.

[7] Conger, Kate. "San Francisco Bans Facial Recognition Technology." The New York Times, May 14, 2019.

[8] Illinois General Assembly. "Biometric Information Privacy Act," 740 ILCS 14/1, 2008.

[9] Selinger, Evan. "What Happens When Employers Can Read Your Facial Expressions?" The New York Times, October 17, 2019.

[10] New York Police Department. "Facial Recognition Policy." NYPD Official Website, updated March 2020.
