Code Meets Community: The Human Side of AI in Local Government

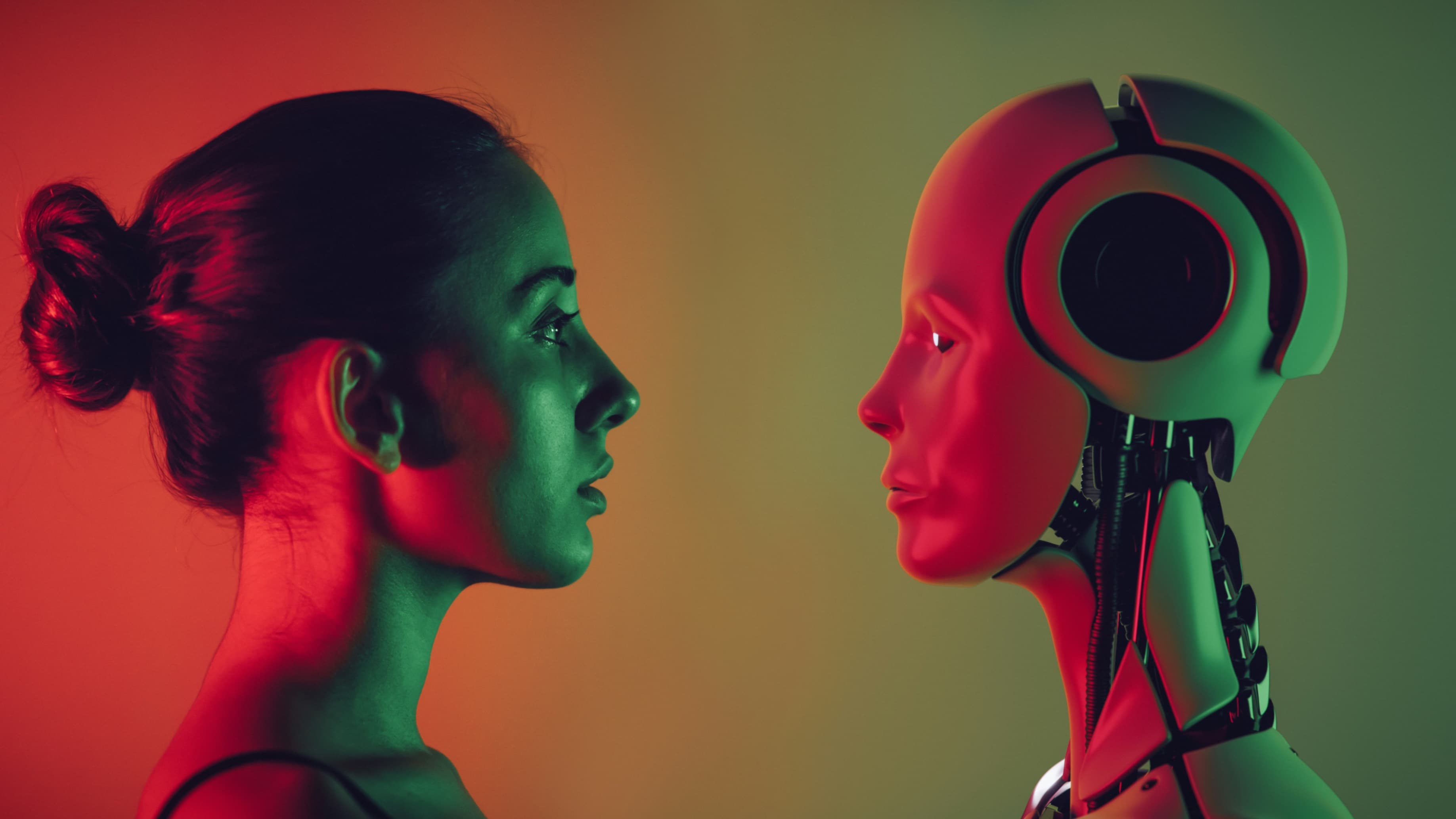

Integrating artificial intelligence into the daily work of local governments requires a focus on solving specific service delivery challenges. AI becomes most effective when it augments existing workflows rather than replacing them. For example, natural language processing (NLP) can be embedded in 311 systems to automatically categorize and route service requests, reducing response time and freeing up staff to handle more complex issues. Some cities have used machine learning to predict which streets are most likely to require pothole repairs based on historical maintenance data, weather patterns, and traffic volume, allowing for preventive maintenance strategies that save money and time over the long term [1].
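
To make the routing idea concrete, here is a minimal sketch in Python. It uses simple keyword matching as a stand-in for a trained NLP classifier. The department names, keyword lists, and the `manual_review` fallback are illustrative assumptions, not a description of any city's system.

```python
import re

# Hypothetical departments and keywords. A production system would
# typically learn these associations from labeled historical requests.
ROUTING_KEYWORDS = {
    "streets": {"pothole", "pavement", "streetlight", "sidewalk"},
    "sanitation": {"trash", "garbage", "recycling", "dumping"},
    "water": {"leak", "hydrant", "flooding", "sewer"},
}


def route_request(text: str) -> str:
    """Return the department whose keywords best match the request text."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    scores = {dept: len(words & kws) for dept, kws in ROUTING_KEYWORDS.items()}
    best_dept = max(scores, key=scores.get)
    # If no keyword matched, leave the request for a staff member to triage.
    return best_dept if scores[best_dept] > 0 else "manual_review"


if __name__ == "__main__":
    print(route_request("Large pothole on Elm Street near the school"))
    print(route_request("Question about my dog license"))
```

The fallback matters as much as the matching: requests the tool cannot place stay with staff, which keeps the tool an aid to the existing workflow rather than a replacement for it.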

To implement AI tools effectively, local agencies must start with a clear definition of the problem they are trying to solve. Whether it's reducing emergency response times or improving permit processing, the goal should drive the technology choice. AI should be seen not as a product but as a capability that supports targeted improvements. This means involving frontline staff early in the design process, validating model outputs through pilot phases, and iterating based on user feedback. By embedding AI in the context of actual service delivery, governments can better ensure that the tools are meaningful, efficient, and adopted at scale [2].

Ensuring Accountability and Ethical Governance

The use of AI in government operations introduces new dimensions of accountability. Transparency about how algorithms function, what data they rely on, and how decisions are made is essential to maintaining public trust. For example, when an AI model is used to prioritize building inspections, residents should be able to understand what factors the system considers and how those factors were selected. This level of transparency requires documentation, explainability tools, and a commitment to open communication with the community [3].

Ethical use of AI also demands robust oversight mechanisms. Governments should establish internal review boards or cross-departmental working groups that assess proposed AI applications through the lenses of equity, privacy, and long-term impact. These groups can evaluate whether the data used to train models accurately represents the communities being served, and whether outcomes reinforce or reduce existing disparities. Embedding these checks within the procurement, implementation, and evaluation phases helps ensure that AI remains a tool of public benefit, not harm [4].
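
One simple check a review group might run is a comparison of how often a model flags cases in each community. The sketch below is an assumption about what such a check could look like, not a method the cited sources prescribe. The group labels and the choice of a lowest-to-highest rate ratio are hypothetical.

```python
from collections import defaultdict


def selection_rates(records):
    """Compute the share of flagged cases per group.

    records: iterable of (group, flagged) pairs, where flagged is a bool.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, is_flagged in records:
        totals[group] += 1
        flagged[group] += int(is_flagged)
    return {group: flagged[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Ratio of the lowest to the highest group rate; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


if __name__ == "__main__":
    # Hypothetical inspection-priority outputs for two districts.
    sample = [("north", True), ("north", False), ("north", False), ("north", False),
              ("south", True), ("south", True), ("south", False), ("south", False)]
    rates = selection_rates(sample)
    print(rates, rate_ratio(rates))
```

A low ratio does not prove unfairness on its own, since groups can differ for legitimate reasons, but it gives the review board a concrete number to question before and after deployment.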

Building Internal Capacity and Data Readiness

A critical success factor for deploying AI effectively is the readiness of an agency’s data infrastructure. Many local governments still struggle with fragmented data systems, inconsistent data standards, and legacy platforms that inhibit integration. Before investing in AI solutions, agencies should prioritize data governance practices, including data cleaning, metadata standards, and interdepartmental data sharing protocols. These foundational steps enable machine learning models to learn from accurate, consistent information and produce reliable outputs [5].
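
As a small illustration of what data cleaning can involve, the sketch below normalizes street addresses so that records held by different departments can be matched and deduplicated. The suffix map and the record layout (a dict with an `address` key) are assumptions made for this example.

```python
import re

# Hypothetical abbreviation map. A real standard would cover many more cases.
SUFFIXES = {"street": "st", "avenue": "ave", "road": "rd", "boulevard": "blvd"}


def normalize_address(raw: str) -> str:
    """Lowercase, strip punctuation, collapse spaces, and abbreviate suffixes."""
    cleaned = re.sub(r"[^\w\s]", "", raw.lower())
    tokens = [SUFFIXES.get(token, token) for token in cleaned.split()]
    return " ".join(tokens)


def deduplicate(records):
    """Keep the first record seen for each normalized address."""
    seen = {}
    for record in records:
        key = normalize_address(record["address"])
        seen.setdefault(key, {**record, "address": key})
    return list(seen.values())


if __name__ == "__main__":
    rows = [
        {"address": "123 Main Street.", "source": "permits"},
        {"address": "  123  MAIN St ", "source": "inspections"},
    ]
    print(deduplicate(rows))
```

Keeping the first record is a deliberate simplification. An agency would more likely merge fields across sources under a documented rule, which is exactly the kind of decision a data governance policy should record.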

Equally important is investing in human capacity. Training staff on AI fundamentals, data literacy, and ethical design empowers them to participate in development and oversight. Some jurisdictions have created in-house innovation teams or partnered with academic institutions to build applied AI skills across departments. These partnerships help bridge technical gaps while keeping public values at the center of the work. When staff understand how models function and how to interpret their outputs, they are better equipped to make informed, accountable decisions [6].

Focusing on Equitable and Community-Centered Use Cases

AI’s most promising applications are those that help governments better understand and respond to the needs of their communities. For example, AI can identify service deserts by analyzing geospatial data on public amenities, transit access, and demographic trends. This allows officials to allocate resources more equitably, such as opening new health clinics in underserved neighborhoods or adjusting transit routes to match shift-worker travel patterns. These applications are not theoretical; they are being piloted in cities that have committed to data equity frameworks and inclusive engagement strategies [7].
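
A very reduced version of the service-desert analysis can be sketched as a distance check: flag any neighborhood whose nearest facility is farther than a chosen threshold. The coordinates, the 3 km threshold, and the use of straight-line (haversine) distance instead of travel time are all assumptions for illustration. A real analysis would also weigh transit access and demographics, as described above.

```python
import math

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def find_service_deserts(neighborhoods, facilities, max_km=3.0):
    """Return names of neighborhoods whose nearest facility exceeds max_km.

    neighborhoods: dict mapping a name to a (lat, lon) centroid.
    facilities: non-empty list of (lat, lon) pairs.
    """
    deserts = []
    for name, (lat, lon) in neighborhoods.items():
        nearest = min(haversine_km(lat, lon, f_lat, f_lon) for f_lat, f_lon in facilities)
        if nearest > max_km:
            deserts.append(name)
    return deserts


if __name__ == "__main__":
    # Hypothetical centroids and a single clinic location.
    areas = {"Riverside": (40.00, -75.00), "Hilltop": (40.10, -75.00)}
    clinics = [(40.00, -75.01)]
    print(find_service_deserts(areas, clinics))
```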

Engaging residents in the design and oversight of AI applications strengthens both legitimacy and effectiveness. Community advisory boards, public data workshops, and transparent feedback loops can ensure that marginalized voices are included in shaping how AI is used. For instance, before deploying predictive analytics in eviction prevention programs, some cities have convened tenants’ rights groups, legal aid providers, and landlords to assess potential risks and unintended consequences. These participatory approaches help align technology decisions with lived experiences and local priorities [8].

Starting Small and Scaling Responsibly

For governments new to AI, starting with small, well-defined pilots allows for learning without outsized risk. A good starting point is automating low-risk, high-volume tasks such as tagging and sorting public records or triaging non-emergency service requests. These use cases can demonstrate value quickly, build organizational confidence, and surface operational insights that inform future investments [9].

Scaling AI responsibly requires a phased approach. After initial pilots, agencies should conduct post-implementation reviews to assess performance, equity impacts, and user satisfaction. Lessons learned should be documented and shared internally and with peer governments. Interjurisdictional collaboration, such as through regional AI working groups or national learning networks, can help smaller agencies benefit from the experience of early adopters. This peer learning model has been especially valuable in areas like traffic safety analytics and housing stability interventions [10].

Bibliography

[1] National League of Cities. “Artificial Intelligence Use Cases in Local Government,” 2023. https://www.nlc.org/resource/artificial-intelligence-use-cases-in-local-government/.

[2] Government Technology. “How Cities Are Using AI to Improve Operations,” 2022. https://www.govtech.com/products/how-cities-are-using-ai-to-improve-operations.html.

[3] World Economic Forum. “Responsible Limits on Facial Recognition,” 2021. https://www.weforum.org/reports/responsible-limits-on-facial-recognition/.

[4] AI Now Institute. “Algorithmic Accountability Policy Toolkit,” 2020. https://ainowinstitute.org/aap-toolkit.pdf.

[5] Data-Smart City Solutions. “Data Governance in the Public Sector,” 2023. https://datasmart.ash.harvard.edu/news/article/data-governance-in-the-public-sector.

[6] Center for Government Excellence at Johns Hopkins University. “Developing Data Capacity in Local Government,” 2022. https://govex.jhu.edu/what-we-do/developing-data-capacity/.

[7] Urban Institute. “How AI Can Advance Equity in Local Government,” 2021. https://www.urban.org/research/publication/how-ai-can-advance-equity-local-government.

[8] Partnership on AI. “Participatory Approaches to AI Governance,” 2022. https://www.partnershiponai.org/report/participatory-approaches-to-ai-governance/.

[9] Bloomberg Center for Public Innovation. “AI Tools for City Service Improvement,” 2023. https://publicinnovation.jhu.edu/resources/ai-tools-for-city-service-improvement/.

[10] Code for America. “AI in Human Services: Lessons from Pilot Programs,” 2022. https://codeforamerica.org/news/ai-in-human-services-lessons-from-pilot-programs/.
